In this piece, I’ll share how we’ve embraced this approach, why it matters, and how any software company, regardless of size or maturity, can apply it to accelerate innovation and execution.

Where and how did it begin for me?

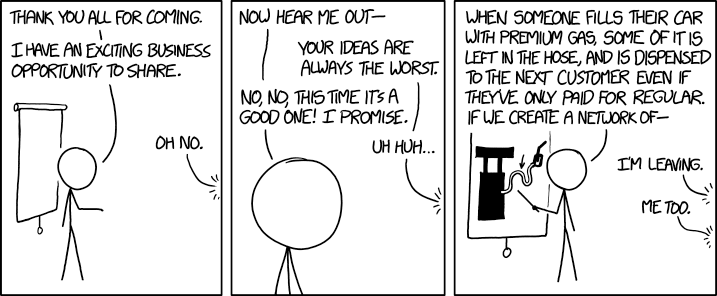

With the release of tools like Cursor, Lovable, V0, and others, I began noticing something remarkable: people from every discipline were suddenly empowered to prototype their own ideas, whether or not they had any programming experience. This democratization of creation signaled that software was no longer the exclusive domain of engineers, but a playground for anyone with imagination.

One of the most striking examples in my circle came from a heart surgeon. He showed me a product he had built on Lovable to streamline requests for doctors’ availability at one of the hospitals where he worked. If a surgeon with no formal software background could design and deploy a functional tool, then the rules of who gets to innovate had clearly changed. The barrier to entry had collapsed, and that meant the dynamics inside companies would soon follow.

At the time, many engineers I spoke with were skeptical. The code generated by these tools wasn’t production-ready (spoiler: it still isn’t). But what mattered wasn’t perfection, it was acceleration. And as the tools matured, I saw even experienced engineers adopting them as idea validators, feasibility checkers, and code accelerators. That shift showed me the future wasn’t about replacement, it was about leverage.

At Codelitt, we’ve always defined ourselves as builders. My conversations with CEOs, product managers, and entrepreneurs revolve around how to turn vision into reality. Soon, I was using these tools myself: spinning up new projects, stress-testing features, salvaging what worked, and discarding the rest. What once required teams and budgets now required only curiosity and a willingness to experiment. The economics of innovation had changed.

This shift transformed our enterprise work as well. I started creating new functionalities without seeking funds or teams, because the cost of experimentation had become almost zero. From there, I interviewed users to uncover which ideas had real traction. That process was the real eye-opener: it wasn’t just about building faster, it was about discovering sooner. For me, that was the moment “AI-first teams” became less of a trend and more of a blueprint for the future.

What is an AI first team?

An AI-first team is one that begins every task with a simple expectation: AI will handle the bulk of the work. Instead of treating AI as an optional add-on, these teams design their workflows so that tasks can be delegated to AI agents first, leaving the human team to focus on reviewing, refining, and guiding the results. The mindset is not about replacing people, but about reframing what human effort is worth spending on.

This approach fundamentally changes the rhythm of work. By leveraging AI as the first draft generator, an AI-first team can shorten feedback loops, test multiple solutions in parallel, and iterate in serial at a fraction of the time. They are not bound by the traditional constraints of bandwidth, resourcing, or even specialized expertise. Instead, they move with the assumption that the bottleneck is no longer execution, it’s judgment.

The takeaway is clear: the defining strength of an AI-first team is not speed alone, but the ability to explore more possibilities in less time. And in a world where innovation is as much about discovering the right solution as building it, that advantage compounds quickly.

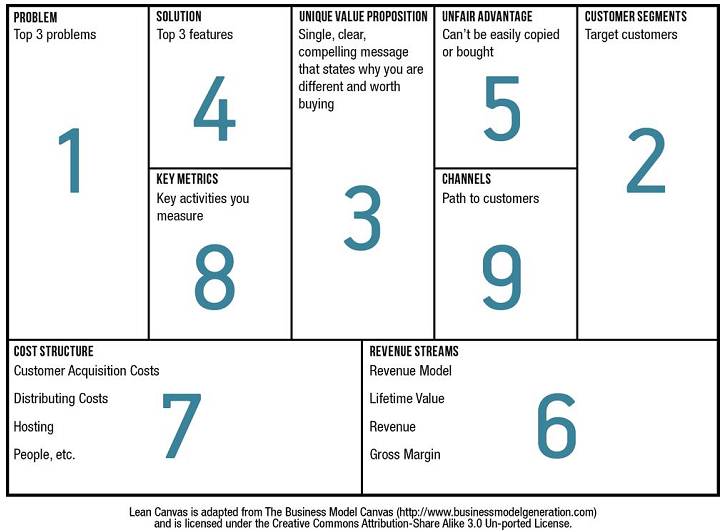

What is the goal of an AI first team?

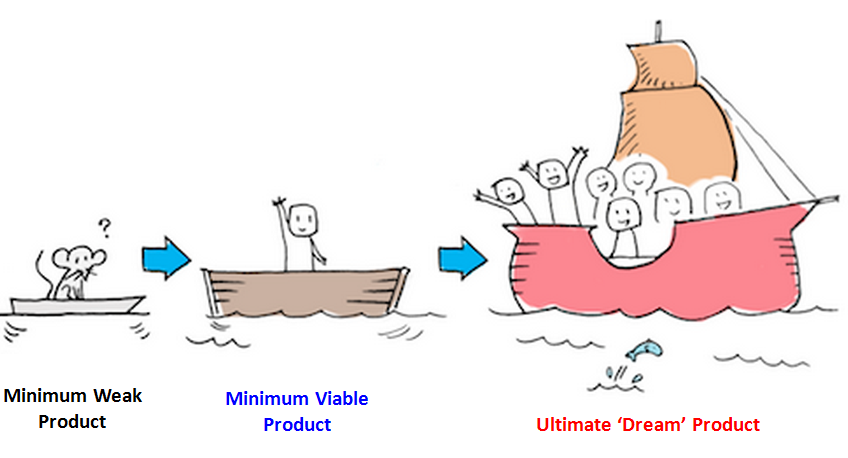

The primary goal of an AI-first team is experimentation. Rather than committing early to a single path, these teams explore a wide range of possible solutions, quickly generating and testing variations until they find one that delivers the right balance of value and feasibility. In this sense, the process isn’t fundamentally different from conventional development, it still requires iteration, validation, and refinement.

What changes is the scale and speed of exploration. AI-first teams can afford to try more ideas, faster, because the cost of each experiment is drastically reduced. However, this approach is not universal. There are scenarios where applying AI-first methods introduces drawbacks, such as complexity, technical debt, or risks in critical systems, that can outweigh the benefits. Knowing when not to apply AI-first thinking is just as important as knowing when to lean into it.

The real lesson is this: the goal of an AI-first team is not to replace traditional development, but to extend its reach. By making experimentation cheaper and more accessible, these teams shift the focus from whether something can be built to which of many possible solutions is worth building.

When to build an AI First Team and where to deploy it?

In my experience, projects with extremely high code complexity are not yet a good fit for AI-first teams. Current AI models still struggle with the sheer amount of context required in these environments, making them more prone to hallucinations, brittle solutions, and unintended side effects. In such cases, the cost of error often outweighs the speed of exploration.

The strongest use cases for AI-first teams today are in new initiatives where experimentation is the priority, or in projects with relatively low code complexity. In these contexts, AI can accelerate the creation of first versions, generate multiple approaches, and help teams move from concept to prototype at an unprecedented pace. Through building projects from scratch with AI, I’ve consistently found that the earliest functionalities are easy to implement, but as the system grows, the models often start breaking or rewriting older code. This reinforces a clear pattern: today’s AI is best suited for exploration, not maintenance.

The takeaway is important for leaders: AI-first teams thrive at the frontiers of innovation, where the goal is discovery and validation. They are less effective in highly complex, long-lived systems where stability and continuity matter most. In other words, they are not a wholesale replacement for traditional teams, but a powerful complement when speed, experimentation, and optionality matter more than perfect reliability.

What is the composition of an AI first team?

The short answer is that we don’t yet have enough data to define a single “ideal” AI-first team structure. What we do know, however, is the set of roles and resources that are essential for these teams to function.

It’s important to note that two AI-first teams can have the same composition on paper and still deliver completely different outcomes. The differentiator is not who sits on the team, but the degree of freedom they have to experiment, combined with the clarity of the goals they’re working toward.

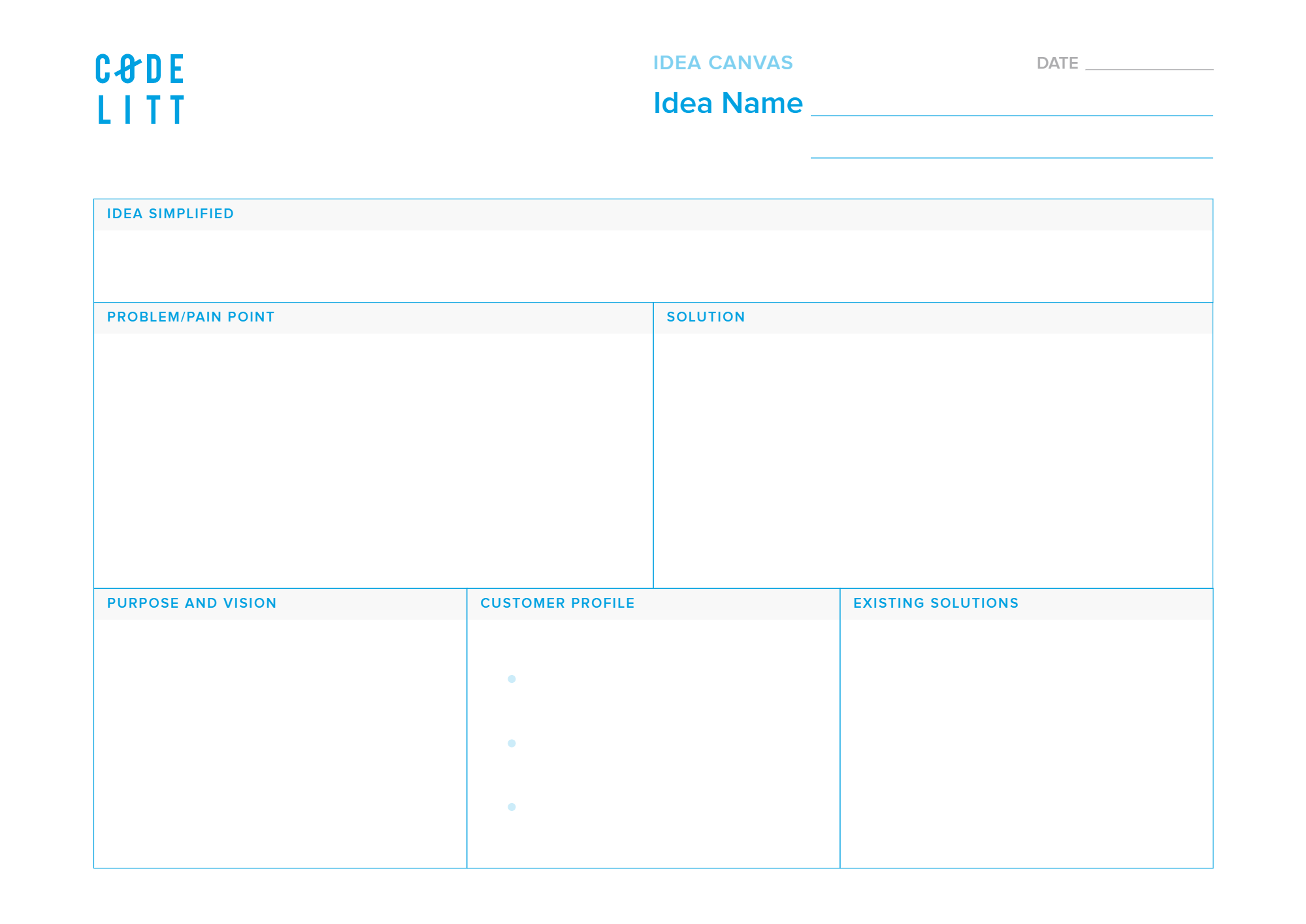

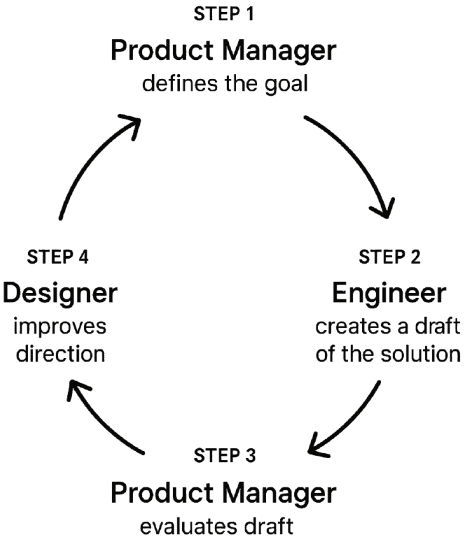

1. Product Manager

Every AI-first team begins with clarity of purpose. The Product Manager is responsible for defining what success looks like, what the team is aiming for in each iteration, and how progress will be measured. In this model, vague ambition is dangerous. Clear, sharp goals are the safeguard against wasted effort. A Product Manager who fails to define these boundaries sets the team up to chase everything, and ultimately achieve nothing.

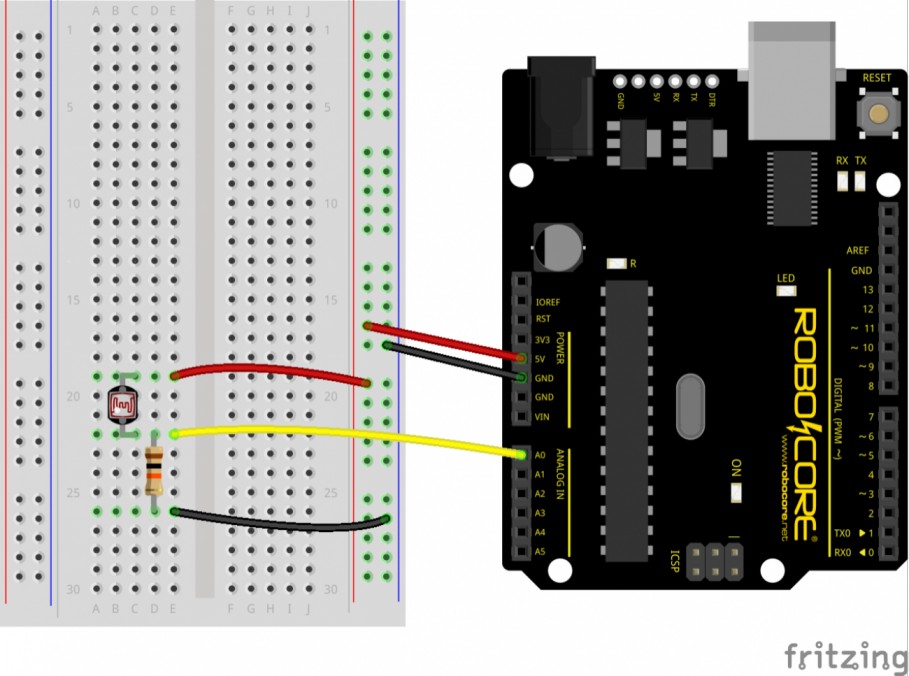

2. Engineer

The Engineer is the builder of first versions. Their role is to take the existing codebase (if there is one), explore multiple approaches, and use AI as a force multiplier to rapidly generate prototypes. Once they land on something that resembles a solution, it’s presented to the Product Manager for validation. Only after that checkpoint does the design layer come into play.

In AI-first teams, the Engineer’s value lies less in perfecting code and more in orchestrating experiments that create viable starting points.

More often than not, the Engineer will be the one proposing the first UI options through the usage of models.

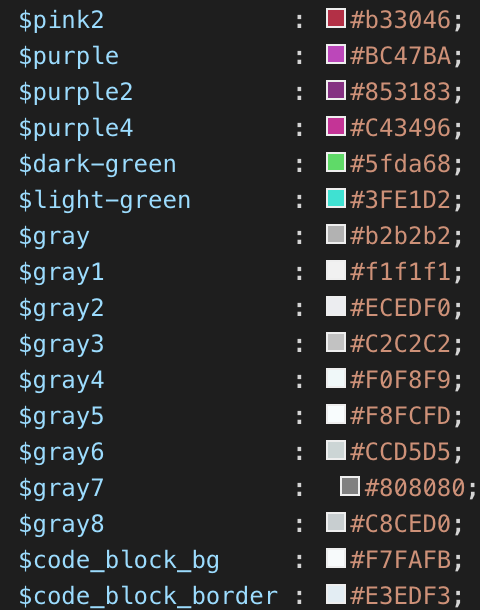

3. Designer

Here is where direction meets experience. An AI-first team needs a clear vision to guide experimentation, but before anything goes to production, especially in more stable products, the Designer must step in. Models can suggest layouts or even generate compelling page designs, but user flow and overall experience require human judgment. That’s where the Designer becomes critical.

Traditionally, designers shaped the user experience from the very beginning. In an AI-first team, the order flips. Instead of defining everything upfront, the Designer evaluates what the Engineer and AI have produced, then realigns it. Sometimes this means small refinements; other times, it requires breaking everything and starting again with sharper guidance.

Designers in AI-first teams are not blueprint makers, they are course correctors. Their role ensures that what AI helps create is not only fast, but also right: not just functional, but usable and genuinely valuable.

What is the Best Environment for an AI-First Team?

AI-first teams don’t thrive everywhere. Their effectiveness depends heavily on the environment they operate in. From my experience, the most productive conditions for an AFT include three key elements: simplicity of codebase, space for iteration, and clarity of goals.

1. A Simple or Non-Existent Codebase

The smaller and less interconnected the codebase, the better. Current AI models remain limited in terms of context, they struggle when required to handle large systems with many dependencies. In projects that span multiple repositories, the cognitive load for AI grows exponentially, creating more opportunities for errors and regressions. Developers can and must course-correct, but the overhead quickly dilutes the speed advantage.

AI-first teams excel in greenfield projects or lightweight systems, where complexity doesn’t choke the AI’s ability to generate useful contributions.

2. Room for Iteration

The first solution generated by an AI-first team is rarely the one that makes it to production, and that’s by design. The real strength of this model lies in rapid iteration: define a goal, generate a solution, evaluate it, gather feedback, and try again. This cycle needs to be embraced, not resisted. Leaders must understand that early outputs are drafts, not final products.

3. Clear Goals, Not Prescribed Paths

AI-first teams need guidance, not micromanagement. Success comes from leaders setting measurable, outcome-driven goals, what to achieve, not how to achieve it. Without this clarity, experiments risk becoming unfocused, and any result may seem acceptable. Precision in defining goals keeps the team aligned while still giving them the freedom to explore.

Worst Environment for an AI-First Team

Not every context is suitable for an AI-first approach. In fact, there are environments where the drawbacks outweigh the benefits, and forcing an AFT into them can slow progress rather than accelerate it.

1. Massive, High-Debt Codebases

When the codebase is enormous or burdened with significant technical debt, the AI struggles to navigate. The complexity of patterns, inconsistencies, and legacy decisions makes it hard for both humans and AI models to understand what goes where. In such cases, AI-generated code often introduces more risk than value, compounding the very problems teams are trying to solve.

2. Big amount of microservices and interconnected tools

When the codebase is composed by many microservices and interconnected tools, the AI struggles to understand the context and the dependencies between them. This makes it hard for the AI to generate useful contributions, and hard to make the changes in all the necessary places.

3. High amount of dependencies between codebases

When the codebase is composed by many dependencies between codebases, the AI struggles to understand the context and the dependencies between them. This makes it hard for the AI to generate useful contributions.

Final thoughts

Moving forward, I intend to use this approach in every project where the goal is to experiment and find the right solution. This approach is by no means a silver bullet, and in my opinion, AI should be used as yet another tool, and not like a silver bullet.

]]>